I attended a webinar the other week on the promise of using AI agents to increase your capacity tenfold. It was arranged by a host whose webinars always follow the same pattern. They pick a subject with a fairly high FOMO factor, presented as “this is HUGE”, so that you become scared of missing out on something. And yes, at a given point in the seminar, you receive a heavily discounted offer to take a course on the subject being discussed. By then, they have created so much FOMO that people are very inclined to sign up for a course where you get a certificate, and everyone is happy and suddenly feels that their capabilities have expanded. That’s all well and good, right?

This made me think more deeply about the concept of “Agentic AI.” And yes, it is fantastic for automating things you do. Take, for example, what I am doing right now: recording a voice memo in Swedish as a draft for a blog post, then letting an AI transcribe it to text and translate it to English. Then I can publish it, and in that way I save a fair amount of writing time. Strictly speaking, I shouldn’t stop writing completely, because then I might lose the ability to actually express myself in writing, even if I don’t believe that will happen in the short term.

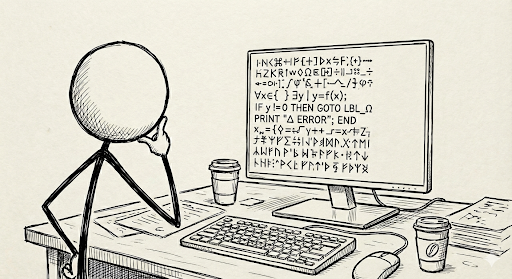

In the webinar, as well as in public debate, there has been a lot of talk for quite a while about how AI agents should be able to do programming. You essentially replace the developer with an AI agent that writes code automatically, and English, they say, is the new programming language. This is incredibly alluring, and it lowers the barrier for a huge number of people, provided they pay, well, 20 or 100 USD a month, depending on which plan they choose for this kind of AI service. In this case, it was about Claude, which is probably, right now at least, the service with the best capability when it comes to agentic AI-supported programming, or vibe coding, or whatever you might call it.

They also talk about building apps and updating web pages, perhaps even creating a web page very quickly and easily, which you can even let the AI agent publish on the web, and then everything is done. The webinar in question was not focused on security; they made clear right from the start that “we are not talking about security here, we are talking about possibilities.” And sure, you can do that, but in practical, everyday application you inevitably have to address the security question as one of the first things you do. You can’t just drop a web page on the internet and let it be so full of security holes that it basically becomes unusable.

The entire web is boiling with sniffing and searching for security holes, and it goes on today with a speed and intensity that is almost hard to comprehend. So you simply have to bring security along with you, right from the start.

Another thing I have thought about is that if everyone starts writing code by prompting in English, the result will be code that no one reviews, wherever it is implemented. Then the quality might end up being “so-so.” What is worse, if you do this in an organisational context, we will also have a future problem. It has an analogy in a story from my own experience that I thought I should recount here:

This was somewhere between, well, 2010 and 2015, when I was assigned as delivery manager for a SharePoint platform (among a slew of other huge platforms and systems) at one of Sweden’s largest government agencies. MS SharePoint was implemented as a global intranet solution for the entire organisation. Delivery was okay, although progress in development and maintenance was, of course, too slow.

Note that this was before MS 365 and the cloud paradigm hit the world, so we lived in an on-premises universe.

The phenomenon that started to emerge was that creative employees who ‘cracked the code’ of configuring SharePoint started building such clever pages for their sub-organisations, teams, or departments/units that they created fantastic tools for the business to use. In some cases, these became more or less critical as tools for the local entity to rely on.

This was great initially; everybody was enthusiastic and development was well anchored in the business. A problem arose, though, when these code-cracking individuals suddenly left for another job or a new position, because then there was no one left who knew what they had done to create the tool. When someone later wanted to improve, change, or perhaps repair something that wasn’t quite right, there was no one to take over and solve the problems that arose for the organisation, or for the local department using the sometimes heavily configured SharePoint space.

I had several quite tough conversations and discussions with our internal client, who felt that this was something we, as maintainers of the SharePoint platform, had to solve — that it was our responsibility to take care of business-caused ruptures due to natural changes in the organisation. I replied to the client that we have no idea what the business units are building and what needs it’s based upon. It’s simply not possible for us (as we are organised, based upon our agreed mission) to manage unknown code or unknown configurations that we don’t understand, especially since there is no documentation anywhere. There’s no foundation for these solutions within the application management team at all. We manage the standard platform in an agreed configuration exactly as it is when deployed. We find it very difficult to take responsibility for what users create in terms of user-centric configuration and content. We simply don’t know what the users are doing.

It was considered upsetting that we had such a ‘nonchalant’ attitude towards our “mission” (obviously a mission with a sliding definition based on mood-at-the-time). But it wasn’t about being anti-customer; it was about being able to take responsibility for something you’ve actually done. And we hadn’t created what the users had created.

So, how does this relate to my opening story about AI agents? I see a risk that AI agents can create, well, ‘sloppy applications’ in a way and on a scale that could become very extensive. If users do this within an organisation, for the organisation, and then move on to a job elsewhere, the organisation is left with an application that is potentially very hard to refactor, configure, or change because no one knows what that employee actually did. It’s quite likely that such an employee is a “single-point-of-failure person”, someone who cracks the code much better than everyone else.

This could have potentially dire consequences for businesses that suddenly find themselves with a whole string of possibly broken tools. I don’t know if we really want to see a future of ‘SharePoint all over the place,’ so to speak.

I wonder what you think about this development: how should we handle it going forward? I believe businesses should be very careful about allowing app development driven by individuals in a stochastic and spontaneous way across the organisation. We cannot simply replace IT professionals, the traditional coders, with AI agents that are fed spoken requirements and needs in order to bring tools to life. I think we will deeply regret it the day these systems require more thorough maintenance.